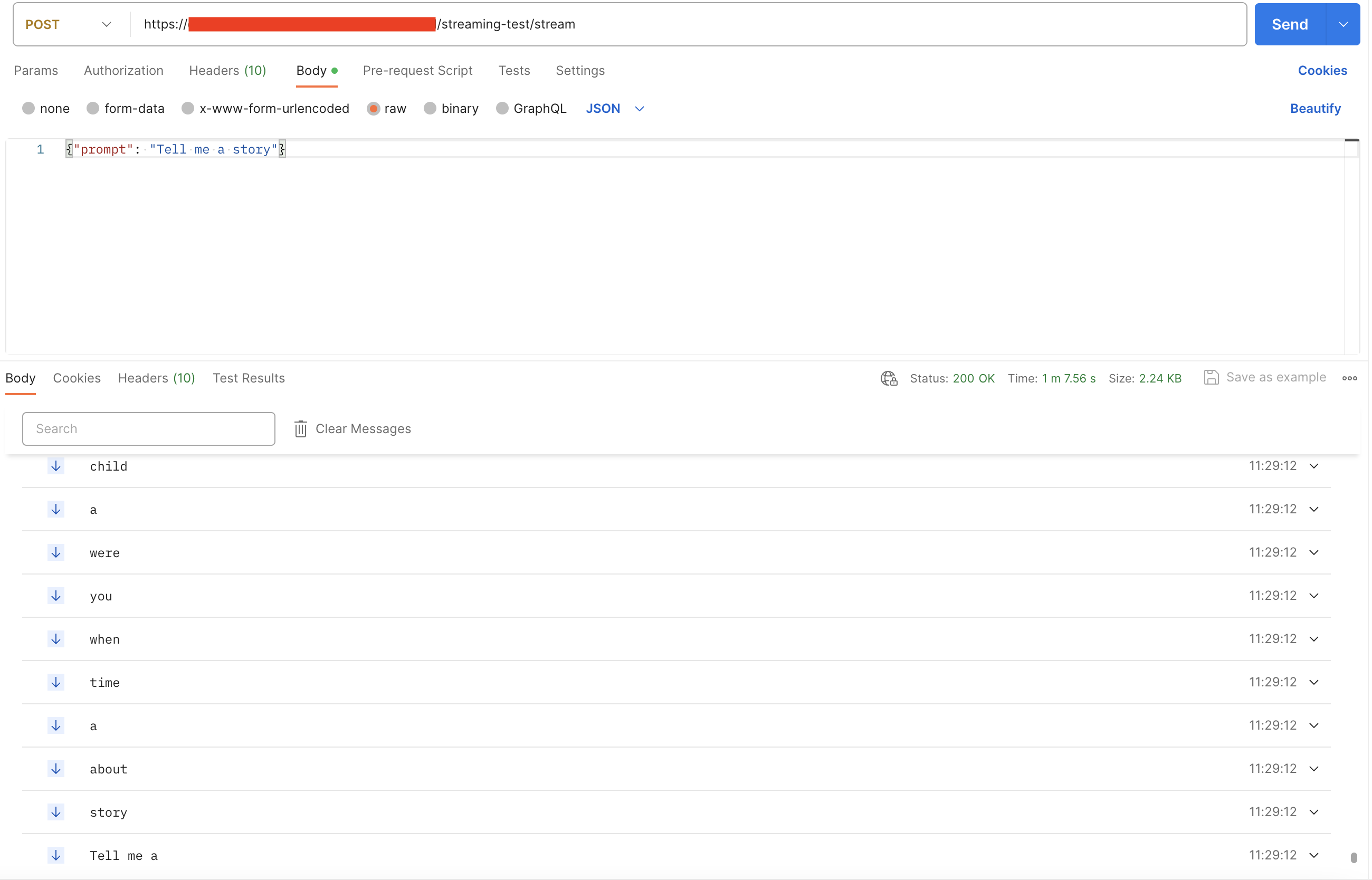

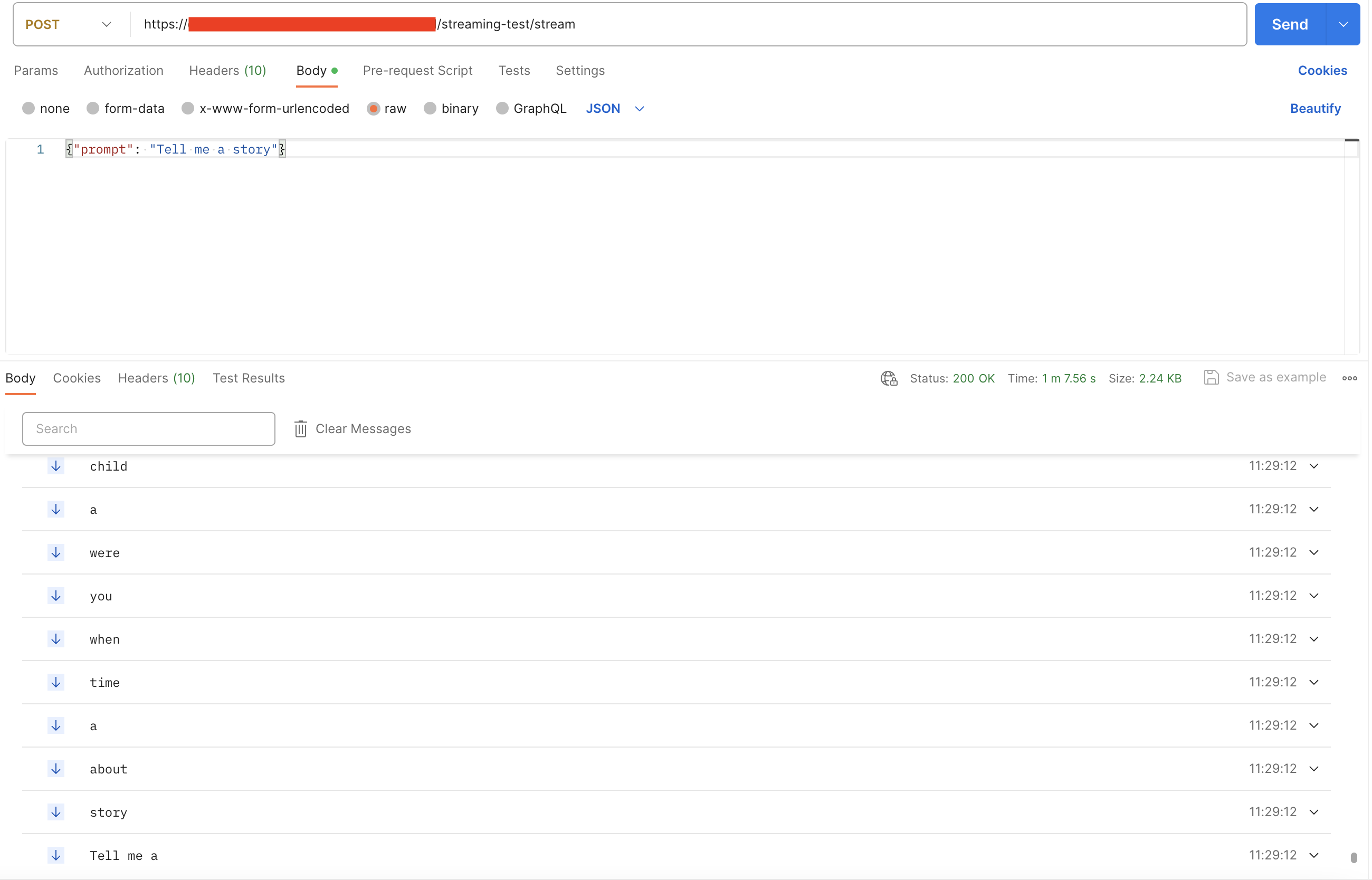

Use `yield` to emit data; each yielded chunk is sent downstream with the `text/event-stream` Content-Type.

Data can be sent in JSON format and decoded on the client side.

A minimal example:
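One way this could look, as a framework-agnostic sketch using only the Python standard library. The names `sse_events` and `decode_frame` and the `step` payload field are assumptions made for illustration; the framing follows the Server-Sent Events convention of a `data:` line terminated by a blank line.

```python
import json
from typing import Iterable, Iterator


def sse_events(items: Iterable[dict]) -> Iterator[str]:
    """Yield each item as one Server-Sent Events frame with a JSON payload."""
    for item in items:
        # One SSE frame: a "data:" line followed by a blank line.
        yield f"data: {json.dumps(item)}\n\n"


def decode_frame(frame: str) -> dict:
    """Client-side counterpart: drop the "data:" prefix and parse the JSON."""
    payload = frame.strip()[len("data:"):].strip()
    return json.loads(payload)


# Simulate the round trip without a network connection.
frames = list(sse_events([{"step": 1}, {"step": 2}]))
decoded = [decode_frame(frame) for frame in frames]
```

In a real server, a generator like `sse_events` would typically be handed to the web framework's streaming response type with the Content-Type set to `text/event-stream`; which response class to use depends on the framework and is not specified here.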
